Whether We Can and Should Develop Strong AI: A Survey in China

Authors:

- Yi Zeng, Professor at the Institute of Automation, Chinese Academy of Sciences, serving as Deputy Director of the Brain-inspired Intelligence Laboratory, and Director of the International Research Center for Artificial Intelligence Ethics and Governance, and Founding Director of Center for Long-term Artificial Intelligence. His research interests are Brain-inspired Artificial Intelligence, AI Philosophy, Ethics and Governance, and AI for Sustainable Development.

- Kang Sun, Research Fellow at the Center for Long-term Artificial Intelligence. His research interests focus on AI Ethics and Governance.

Foreword: The purpose of this survey is to objectively present the opinions of Chinese scholars and practitioners from different fields on the feasibility and necessity of developing Strong AI. The survey aims to provide a reference for relevant research and for further discussion among scholars and the general public with different backgrounds. It was conducted from May to July 2021. At that time, concepts such as “Strong AI” and “Artificial General Intelligence” were not as widely recognized and discussed as they are at the time of the official release of this report. The results can therefore better reflect the participants’ original thoughts.

Acknowledgement: The survey was mainly conducted among young and middle-aged AI-related students and scholars, as well as participants from other fields, with 1,032 valid questionnaires collected. Researchers from the International Research Center for AI Ethics and Governance at the Institute of Automation of the Chinese Academy of Sciences and the Center for Long-term Artificial Intelligence (CLAI) participated in the survey design, implementation, and results analysis. During the survey, 63 experts were invited to participate through the Beijing Academy of Artificial Intelligence (BAAI), the China Computer Federation (CCF), the Chinese Association for Artificial Intelligence (CAAI), and the Chinese Association of Automation (CAA). We sincerely thank these organizations and experts for their support. Special thanks to Professor Tiejun Huang, Dean of BAAI, for participating in the initial discussions and for helping with outreach to different organizations.

I. Introduction

Artificial Intelligence (AI) is a disruptive technology that has the potential to change the world. People accept AI as a tool to serve humanity and expect it to continue to develop to achieve greater efficiency. However, the continuous development of AI technology towards Strong AI has sparked debate. Currently, progress in AI is mainly focused on completing specific types of intelligent tasks or solving specific types of intelligent problems, and these capabilities cannot be automatically extended to other types of tasks. An important direction for the future development of AI is to achieve intelligent systems that can adapt to external environmental challenges. However, opinions still differ on whether it is necessary to develop a general AI that can perform all tasks that humans can, or even a superintelligence that surpasses human intelligence. To promote the orderly development of the next generation of AI and to avoid potential risks, we conducted a special survey on “whether we can and need to achieve Strong AI”. For this survey, the following definitions were adopted:

(1) Specialized Artificial Intelligence: also known as Narrow AI, refers to artificial intelligence that can complete specific intelligent tasks or solve specific intelligent problems, but cannot automatically generalize to achieve other types of intelligence.

(2) Autonomous Intelligence: refers to intelligence that can adapt to external environmental challenges at different levels. Animals on Earth possess different levels of autonomous intelligence.

(3) Autonomous Artificial Intelligence: refers to artificial intelligence that can adapt to external environmental challenges. Autonomous artificial intelligence can be similar to animal intelligence, called (specific) animal-level autonomous artificial intelligence, or unrelated to animal intelligence, called non-biological autonomous artificial intelligence.

(4) Artificial General Intelligence: refers to autonomous artificial intelligence that reaches human-level intelligence. It can adapt to external environmental challenges and complete all tasks that humans can accomplish, achieving human-level intelligence in all aspects. It is also known as Human-Level AI.

(5) Superintelligence: refers to Artificial General Intelligence that has surpassed humans in all aspects of human intelligence.

(6) Strong AI: refers to the combination of Artificial General Intelligence/Human-Level AI and Superintelligence.

II. Key Findings

Through this survey and analysis of the relevant data, the following key findings were obtained:

1. Most participants believe that Strong AI can be achieved. This holds for both AI professionals and people from other fields.

2. More participants believe that Strong AI cannot be achieved before 2050, and around 90% of participants believe that after 2120 would be even more promising. Most participants believe that Strong AI cannot be achieved within the next 10 years, and more of them consider it more likely to be achieved after 2050. Around 90% of participants believe that after 2120 (roughly 100 years later) would be even more promising, especially professional AI researchers. This is somewhat later than the results of surveys outside China.

3. Most participants believe that Strong AI should be developed. AI professionals and natural science participants express more confidence in this regard than scholars in the humanities and social sciences.

4. Most participants believe that humans are willing to coexist harmoniously with Strong AI, and that Strong AI can coexist harmoniously with humans. The survey considered the possibility of harmonious coexistence from two perspectives: whether humans are willing to live in harmony with Strong AI, and whether Strong AI can coexist harmoniously with humans. Based on the responses, most participants believe that both humans and Strong AI can proactively work together towards harmonious coexistence.

5. Most participants believe that Strong AI will pose existential risks to humans. In public and media discussions, there is no consensus among people from different fields on the development of Strong AI, and even in the related academic and industrial communities there are constant debates. The core issue that triggers these debates is whether Strong AI will pose an existential risk to humanity. The survey reflects the participants’ concerns about this issue, with most of them believing that Strong AI will indeed pose an existential risk to humanity.

6. Participants generally pay close attention to the ethical issues brought by Strong AI. Participants generally believe that any answer to how Strong AI should be developed must investigate and find ways to solve the ethical issues it brings. Concern about ethical issues is the main factor that affects participants’ views on whether Strong AI should be developed.

7. Most participants believe that Strong AI will assist in human development. Participants generally believe that Strong AI will bring about more efficient production and a more convenient way of life. This is also the main reason why participants accept that Strong AI should be developed and believe that it can be achieved.

8. AI scholars and professionals are more optimistic about the development of Strong AI than participants from other fields. The survey shows that even though participants are concerned about the potential existential risks of Strong AI, they also recognize the value of developing it, and most believe that it can be achieved. AI scholars and practitioners are more optimistic on this point than participants from other fields.

III. Research Methodology

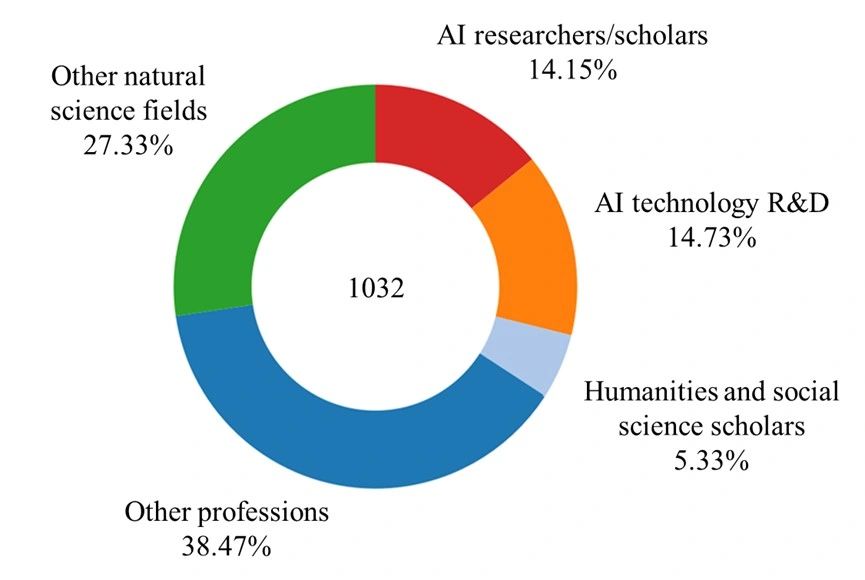

The survey is composed of 13 questions. The research team released it publicly on a platform where it could be answered using a smartphone or computer, and also shared it through WeChat Moments, friend groups, and other means. The survey results and the opinions presented are completely anonymous, and participants could choose to answer all or part of the questions according to their personal preferences.

The survey was conducted from May 18th, 2021 to July 14th, 2021. A total of 1,049 questionnaires were collected, and 17 invalid questionnaires were excluded, resulting in 1,032 valid survey responses. Among the participants, 14.15% are AI scholars, 14.73% are from AI technology research and development, 27.33% are from other natural science fields, 5.33% are scholars from the humanities and social sciences, and 38.47% are from other professions, as shown in Figure 1. Among them, 63 participants hold honors such as fellow or distinguished scholar of the Chinese Association for Artificial Intelligence (CAAI), the China Computer Federation (CCF), or the Chinese Association of Automation (CAA), or are Beijing Academy of Artificial Intelligence (BAAI) scholars.

Figure 1. Participants’ professions of the Strong AI Survey in China
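As a quick arithmetic check on the sample described above, the following minimal sketch recomputes the number of valid responses and converts the reported percentages into approximate headcounts. The headcounts are inferred by rounding and are not reported in the survey itself; the variable names are illustrative only.

```python
# Figures taken from the survey text.
collected = 1049
invalid = 17
valid = collected - invalid  # valid questionnaires

# Reported share of each profession, in percent.
shares = {
    "AI scholars": 14.15,
    "AI technology R&D": 14.73,
    "Other natural sciences": 27.33,
    "Humanities and social sciences": 5.33,
    "Other professions": 38.47,
}

# Approximate headcounts (an inference from rounding, not survey data).
approx_counts = {group: round(valid * pct / 100) for group, pct in shares.items()}

# The published percentages are rounded, so they need not sum to exactly 100.
total_share = round(sum(shares.values()), 2)

for group, count in approx_counts.items():
    print(f"{group}: about {count} participants")
print(f"valid responses: {valid}, sum of shares: {total_share}%")
```

The rounded headcounts add up to the number of valid responses, which suggests the published percentages are internally consistent.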

IV. Research Analysis and Conclusion

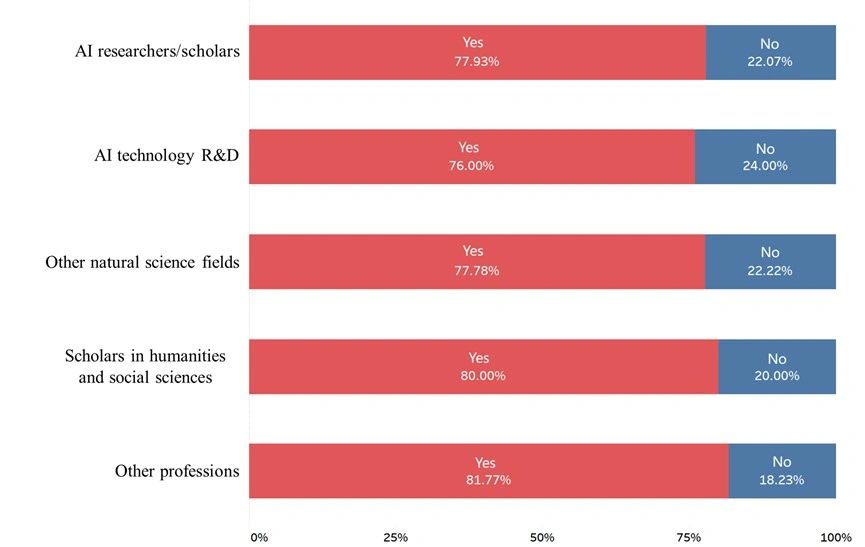

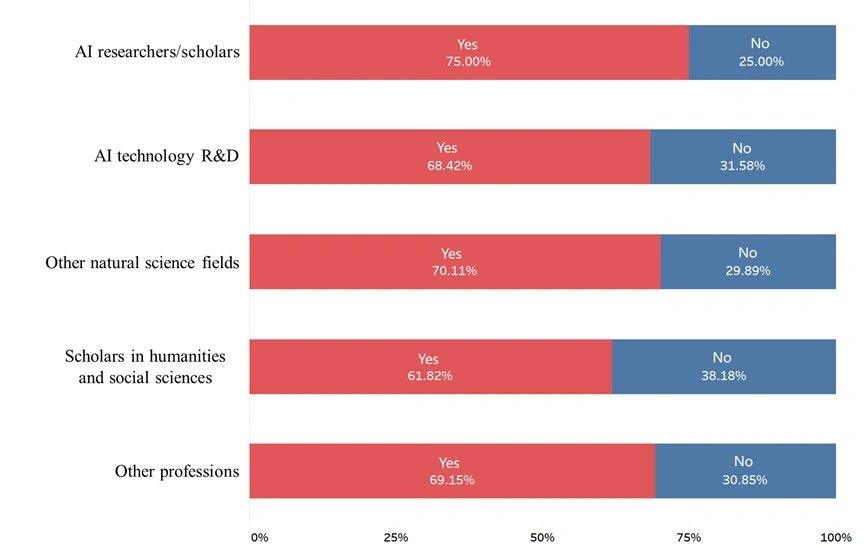

1. Most participants believe that Strong AI can be realized.

There are continuous debates in the academic community regarding the realization of Strong AI [Vincent 2014, Oren 2016, Toby 2018]. According to the survey data shown in Figure 2, the majority of participants are confident that Strong AI can be achieved. This belief is held by over 76% of participants from the AI research and development community, and there is no significant difference among participants from the other fields, such as the humanities and social sciences, other natural sciences, and other professions. In addition, this result is relatively consistent with the result reported in [Oren 2016], where 80 AAAI Fellows were asked a similar question and 75% of them thought that Superintelligence can be realized.

Although participants generally believe that Strong AI can be achieved, when asked why it might not be, they gave reasons such as “current technology cannot achieve it”, “consciousness and emotions are key to Strong AI, and machines or technology cannot generate or achieve consciousness or emotions”, “Strong AI is just imitation of humans, and the complexity of human brain is unsolvable by technology”, and “risk, ethical, and public opinion issues”. Thus, even though most participants believe that Strong AI can be achieved, they also identify factors that may affect its realization. Overall, the reasons for believing that Strong AI cannot be achieved fall into two main categories: the first is that technology cannot achieve it, and the second is that its development or implementation should be restricted due to significant risks and ethical concerns.

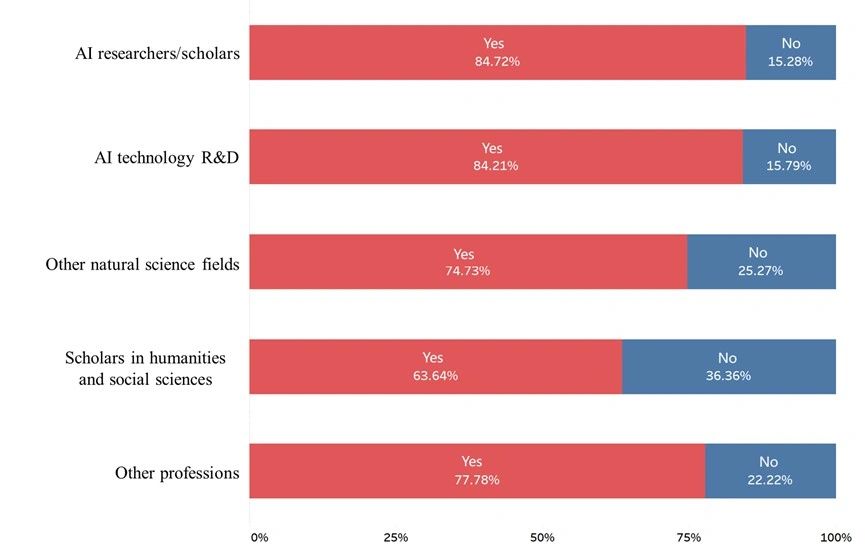

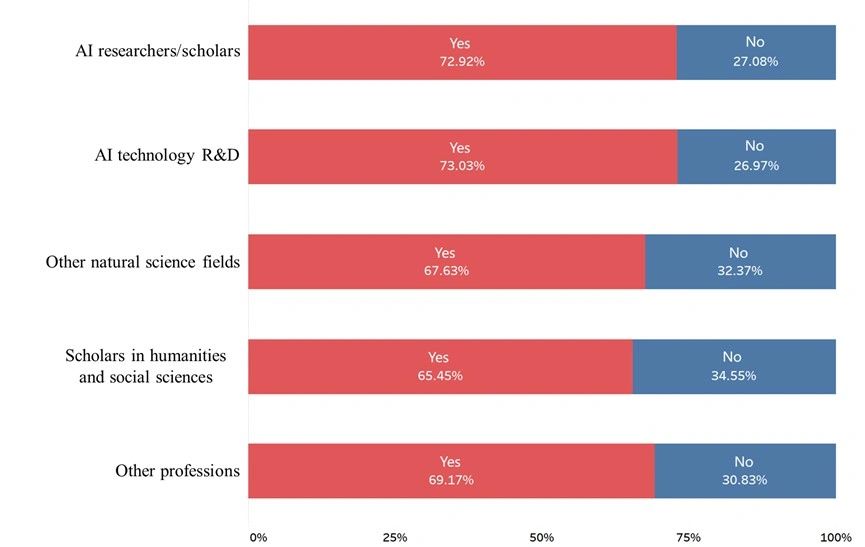

Figure 2. Do you think Strong AI can be achieved?

2. Most participants believe that Strong AI should be developed.

According to Figure 3, participants generally believe that Strong AI should be developed. Among scholars in the humanities and social sciences, 63.64% believe that it should be developed. Although this is more than half, it is the lowest percentage among all professions surveyed. This indicates that scholars in the humanities and social sciences are more concerned about the ethical and safety issues of Strong AI than those in other fields.

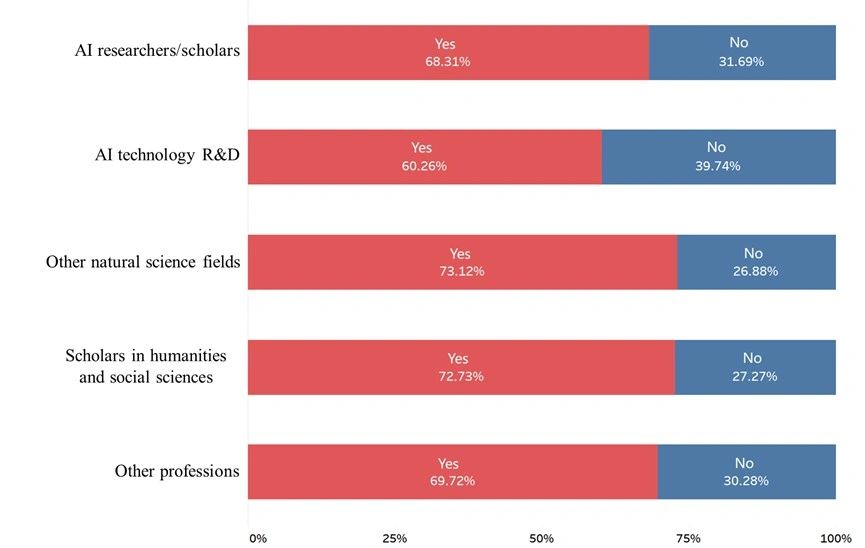

Figure 3. Do you think Strong AI should be developed?

3. Most participants believe that humans are willing to coexist harmoniously with Strong AI, and also believe that Strong AI can coexist harmoniously with humans.

According to the survey data, most participants (68.51%) believe that humans are willing to coexist harmoniously with Strong AI. At the same time, a similar share of participants (68.99%) believe that Strong AI can coexist harmoniously with humans, as shown in Figures 4 and 5. Scholars in the humanities and social sciences had a slightly lower percentage than those in other fields. The overall result indicates that participants have similar levels of confidence in both “humans coexisting harmoniously with Strong AI” and “Strong AI coexisting harmoniously with humans”. Some participants explicitly stated that whether the two can coexist harmoniously will depend mainly on the human side.

Figure 4. Do you think humans are willing to coexist harmoniously with Strong AI?

Figure 5. Do you think Strong AI can coexist harmoniously with humans?

4. Most participants believe that Strong AI will pose existential risks to humans.

There has been no consensus among people from various sectors of society on the development of Strong AI. Academics, industry professionals, and the public have been constantly debating and expressing their opinions on this issue. The core issue that triggers this debate is whether Strong AI will pose existential risks to humanity. Figure 6 reflects participants’ concerns about this issue, with most of them believing that Strong AI will indeed pose existential risks to humanity. The percentage of participants who are convinced of this is higher among those in non-AI fields than among those in AI fields.

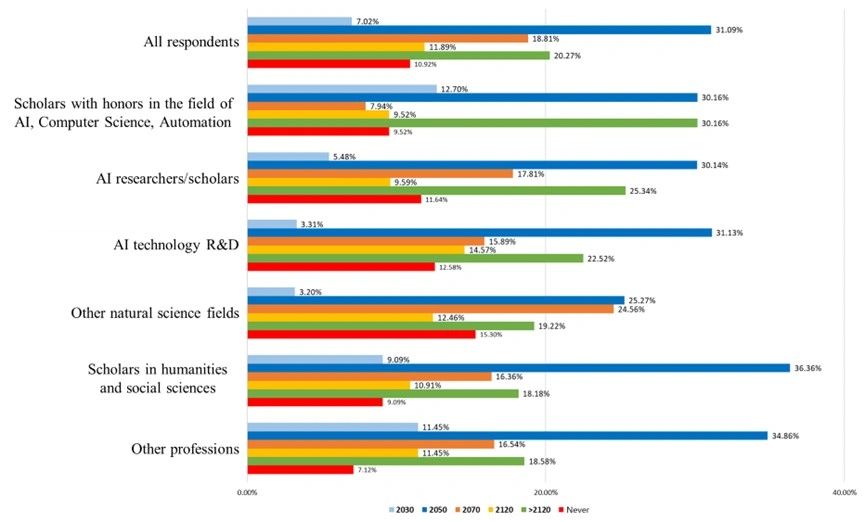

Figure 6. Do you think Strong AI poses existential risks to humans?

5. More participants believe that Strong AI cannot be achieved before 2050, and around 90% of participants chose “after 2120” or an earlier time point.

As shown in Figure 7, most participants believe that Strong AI can be achieved, and that it is more likely to be achieved in 2050 or later (the option 2050 received the highest percentage of responses). The second most selected time for achieving Strong AI is after 2120. Adding up the results, 89.08% of all participants selected the time point “after 2120” or an earlier one for achieving Strong AI, while the corresponding figure for AI scholars and AI technology R&D participants was 87.88%. This view is somewhat delayed compared with research conclusions outside China, as demonstrated by the survey in [Toby 2018], which showed that 90% of AI scholars believe this time is around 2109, and 90% of automation and robotics scholars lean towards 2118. This partially indicates that Chinese scholars may be less optimistic about when Strong AI will be realized.

In addition, the survey data shows that the proportion of scholars and researchers in AI, technology development, and other natural sciences who believe that Strong AI can never be achieved is higher than that of scholars in the humanities and participants from other professions. This reflects that a certain proportion of researchers and industry professionals engaged in fields such as artificial intelligence and computer science believe that there is a huge gap between current AI technology and the achievement of Strong AI.

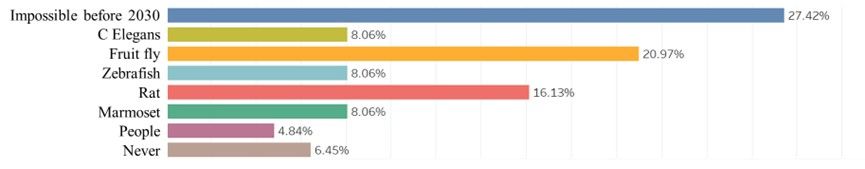

Figure 7. When do you expect Strong AI to be developed?

6. More than half of the participants who received academic honors (from CAAI, CCF, CAA or as BAAI scholars) believe that autonomous AI at a certain level can be achieved before 2030.

Among the participants who have received academic honors, 27.42% believe that autonomous AI cannot be achieved before 2030. 20.97% believe that autonomous AI can reach at most the level of fruit flies before 2030, while 16.13% believe it can reach the level of rats, 8.06% the level of marmosets, 8.06% the level of zebrafish, and 8.06% the level of C. elegans. Only 4.84% believe it can reach the level of humans, and 6.45% believe it is impossible to achieve, as shown in Figure 8.
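The “more than half” statement about participants with academic honors can be checked by summing the shares reported for each level of autonomous AI expected by 2030. This is a minimal sketch of that arithmetic; the grouping of the two negative answers is our reading of the text, and the labels are illustrative.

```python
# Shares (in percent) reported for participants with academic honors.
shares_2030 = {
    "cannot be achieved before 2030": 27.42,
    "fruit fly": 20.97,
    "rat": 16.13,
    "marmoset": 8.06,
    "zebrafish": 8.06,
    "C. elegans": 8.06,
    "human": 4.84,
    "impossible to achieve": 6.45,
}

# Assumption: these two answers mean "no level reached by 2030".
negative = {"cannot be achieved before 2030", "impossible to achieve"}

some_level = round(
    sum(pct for answer, pct in shares_2030.items() if answer not in negative), 2
)
total = round(sum(shares_2030.values()), 2)

print(f"expect some level of autonomous AI by 2030: {some_level}%")
print(f"sum of all reported shares: {total}%")
```

Under this grouping, roughly two thirds of the honored participants expect some level of autonomous AI by 2030, and the reported shares sum to just under 100% because of rounding.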

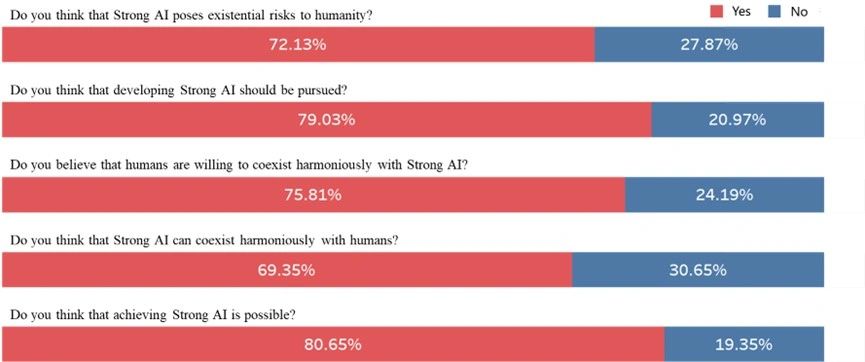

Figure 8. Participants who have received academic honors expect that by 2030, autonomous AI can reach the level of which animal at most?

7. Most participants who received academic honors (from CAAI, CCF, CAA or as BAAI scholars) believe that Strong AI can be achieved.

As shown in Figure 9, 80.65% of the participants who received academic honors believe that Strong AI can be achieved. However, other participants who also received academic honors believe that achieving it is difficult or even impossible, with reasons such as “the mechanism of human intelligence formation cannot be developed by current scientific research methods”, and “the foundation of computer science has not undergone a fundamental change, it is still based on mathematical logic and cannot reflect the probabilistic characteristics of human society. In addition, the interaction between natural environment and humans will lead to continuous progress of humans, which cannot be reflected by Strong AI”.

Among participants who received academic honors, 72.13% believe that Strong AI poses a risk to human existence (the figure for all participants is 68.41%). They believe that Strong AI may challenge or even destroy humanity, with reasons such as “lack of trustworthy guarantees, development of self-awareness capabilities, inability for human to constrain their behaviors, competition with human for resources, attacking human who do not share its ideology, and its unlimited lifespan, unpredictability, and more unknowns, etc.”

Among participants who received academic honors, 79.03% believe that Strong AI should be developed (the figure for all participants is 77.13%). Reasons include “autonomously expanding human intelligence, replacing humans to perform advanced intellectual tasks, replacing humans to engage in more sophisticated and meticulous work, greatly improving productivity and efficiency, reducing human workloads, better assisting and serving humans, helping humans prevent natural disasters, achieving perfect understanding and transcending of oneself, enhancing the overall survival and development capabilities of humans, and promoting humans to face the future and explore the universe”.

Among participants who received academic honors, 69.35% believe that “Strong AI can coexist harmoniously with humans”, while 75.81% believe that “humans are willing to coexist harmoniously with Strong AI” (data for all participants: 68.51% of participants believe that Strong AI can coexist harmoniously with humans, and 68.99% of participants believe that humans are willing to coexist harmoniously with Strong AI). It is worth noting that the share of participants with academic honors who agree that “humans are willing to coexist harmoniously with Strong AI” is clearly higher than the average for all participants in this survey.

Figure 9. Responses from participants who have received academic honors.

8. Participants are generally concerned about the ethical issues brought by Strong AI.

The survey asked the open-ended question “What risks do you think Strong AI poses?”. Among the responses, worry about a series of problems caused by the uncontrollability of Strong AI was expressed most often. Because it may be uncontrollable, participants are worried about “challenging humans”, “uncontrollability”, and even “destroying humanity”. Most participants are concerned about “the problem of unemployment caused by Strong AI”, “widening the gap between rich and poor”, and “causing unfairness”. Some participants are concerned about “confronting humans”, “controlling, enslaving, and ruling humans”, “replacing humans”, and “machine tyranny”. These viewpoints show that participants are generally concerned about the ethical issues brought by Strong AI, and that even participants who agree with developing Strong AI share this concern. The ethical issues center on whether Strong AI is safe and controllable, whether it will “challenge humans and harm human power”, and even “destroy humanity”.

9. Most participants responded positively on how Strong AI can assist in human development.

In the survey, we asked the question “What are the benefits of Strong AI?”. 73.06% of the participants responded positively about the benefits brought by Strong AI, while 3.97% believed that “Strong AI has no benefits”, that “it is not clear what benefits there will be”, or that “the harms brought by Strong AI outweigh the benefits”. The remaining 22.97% chose not to answer. Among the responses, the view expressed most often was “replacing (or enhancing) human abilities (mental labor, repetitive work, organization and coordination, etc.) and making intelligence, automation more comprehensive, thereby greatly improving productivity”. The views of “making life more convenient”, “making up for human shortcomings”, “engaging in dangerous work”, and “exploring unknown fields” are also prominent. Many participants expressed views such as “promoting the development of technology, economy, society, and humanity, making humans more free, and liberating humans”. A few participants expressed concerns that “Strong AI will make humans lazier”, and a few believed that “there are no benefits” or that “the benefits brought by Strong AI can also be achieved through other means”. These responses show that the majority of respondents view the benefits of Strong AI positively, which can be considered a key component of their acceptance of, and belief in, the development of Strong AI. However, we also need to recognize that a small percentage of respondents expressed clear resistance, believing that Strong AI is more harmful than beneficial, or even has no benefits at all.

10. AI scholars and practitioners are more optimistic about the development of Strong AI compared to participants in other fields.

The survey shows that even though participants are worried about the risks of Strong AI to human existence, they also recognize the value of developing Strong AI and believe in its realization. Among them, AI scholars and practitioners, and participants with academic honors, are more optimistic and positive.

V. Conclusion and Recommendations

The opinions expressed by academia and industry regarding the future of AI fall on both sides, namely “positive for development” and “showing concerns for potential risks”, with concerns such as “AI posing a threat to human” and “AI weapons posing a threat to humanity” [Miriam 2014, Elizabeth 2015, Catherine 2017, Catherine 2019]. These different views further confirm that society has both concerns and expectations for the future of AI.

In this survey, some of the participants who received academic honors believed, based on the current state of technology, that the technology needed to achieve Strong AI is still lacking. They believe that “many fundamental issues and computing frameworks have not been resolved”; “so far, humans know very little about the essence of human intelligence, and the biological and cognitive foundations are far from established. The current difference between computer principles and biology is too great”; “the mechanism of human intelligence formation cannot be developed by the current scientific research methodology”; “the foundation of computer science has not undergone a fundamental change, and currently it is only mathematical logic, which cannot reflect the probabilistic characteristics of human society. In addition, the interaction between the natural environment and humans will also lead to continuous progress, which cannot be reflected in Strong AI”. We believe that although current AI technology is not yet sufficient to achieve Strong AI, we still need to investigate and actively respond to the ethical issues that Strong AI may bring, because there is no guarantee that methods for solving these problems will be proposed and well prepared before Strong AI arrives. The exploration and research of ethical issues related to Strong AI is not limited to scholars and practitioners of AI or the humanities. As this issue concerns the future of humanity, the participation of the whole of society is also necessary. The AI industry, academia and the research community should take an interdisciplinary perspective, maintain a proactive and forward-looking grasp of the future of AI, and engage in practice and reflection. Conducting ethical research on Strong AI before it is realized is not groundless anxiety, and we must always ensure that Strong AI develops towards safety, controllability, and trustworthiness. Strong AI should not and cannot become a threat to humanity.

The research shows that society both anticipates and worries about the development of Strong AI. The survey data shows that most people believe that Strong AI should be developed and that it will be achievable in the future, but they also express concerns about the ethical issues it may bring. While some scholars and public figures warn that Strong AI is dangerous and should not be developed, and many scholars believe that existing technical methods are insufficient to achieve human-level intelligence, more people believe in the future potential of Strong AI. Preventing the development of Strong AI does not align with the views of the majority in the survey, as people look forward to the benefits it may bring. At the same time, we acknowledge the potential risks of Strong AI to humanity, and we must not ignore the existential risks it may bring. The AI industry, academia, and society should aim to use technology for good, deeply investigate and actively respond to the risks brought by the development of Strong AI, pay attention to problems and ethical impacts that may arise in the future, and recognize that the development of Strong AI is closely related to the future of all humanity. If Strong AI does come true, no country should use it for violent purposes, such as weapons, and Strong AI should not become a tool for the strong to bully the weak, but rather should empower the achievement of global harmonious coexistence and sustainable development.

We cannot accurately predict the future of Strong AI, whether from a scientific and technological or a humanistic perspective. However, we must constantly remind ourselves of the purpose of developing Strong AI and the risks it may bring to us. We should take the ethical issues of Strong AI seriously and explore and prepare early for the potential risks that may arise in the future.

References:

[Vincent 2014] V. C. Müller, N. Bostrom. Future progress in artificial intelligence: A survey of expert opinion. Fundamental Issues of Artificial Intelligence, V. C. Müller, Ed., Berlin, Germany: Springer, pp. 555–572, 2014.

[Toby 2018] Toby Walsh. Expert and Non-expert Opinion About Technological Unemployment. International Journal of Automation and Computing. 2018.

[Oren 2016] Oren Etzioni. No, the experts don't think super intelligent AI is a threat to humanity. MIT Technology Review, Massachusetts Institute of Technology, Ed., Cambridge, MA, USA: Massachusetts Institute of Technology, 2016.

[Catherine 2017] Catherine Clifford. Facebook CEO Mark Zuckerberg: Elon Musk’s doomsday AI predictions are ‘pretty irresponsible’. July 24th, 2017. https://www.cnbc.com/2017/07/24/mark-zuckerberg-elon-musks-doomsday-ai-predictions-are-irresponsible.html

[Catherine 2019] Catherine Clifford. Bill Gates: A.I. is like nuclear energy — ‘both promising and dangerous’. March 26th, 2019. https://www.cnbc.com/2019/03/26/bill-gates-artificial-intelligence-both-promising-and-dangerous.html

[Elizabeth 2015] Elizabeth Peterson. Stephen Hawking on Reddit: Ask Him a Question on Artificial Intelligence. July 28, 2015. https://www.livescience.com/51668-stephen-hawking-reddit-ama.html

[Miriam 2014] Miriam Kramer. Elon Musk: Artificial Intelligence Is Humanity’s ‘Biggest Existential Threat’. October 28, 2014. https://www.livescience.com/48481-elon-musk-artificial-intelligence-threat.html