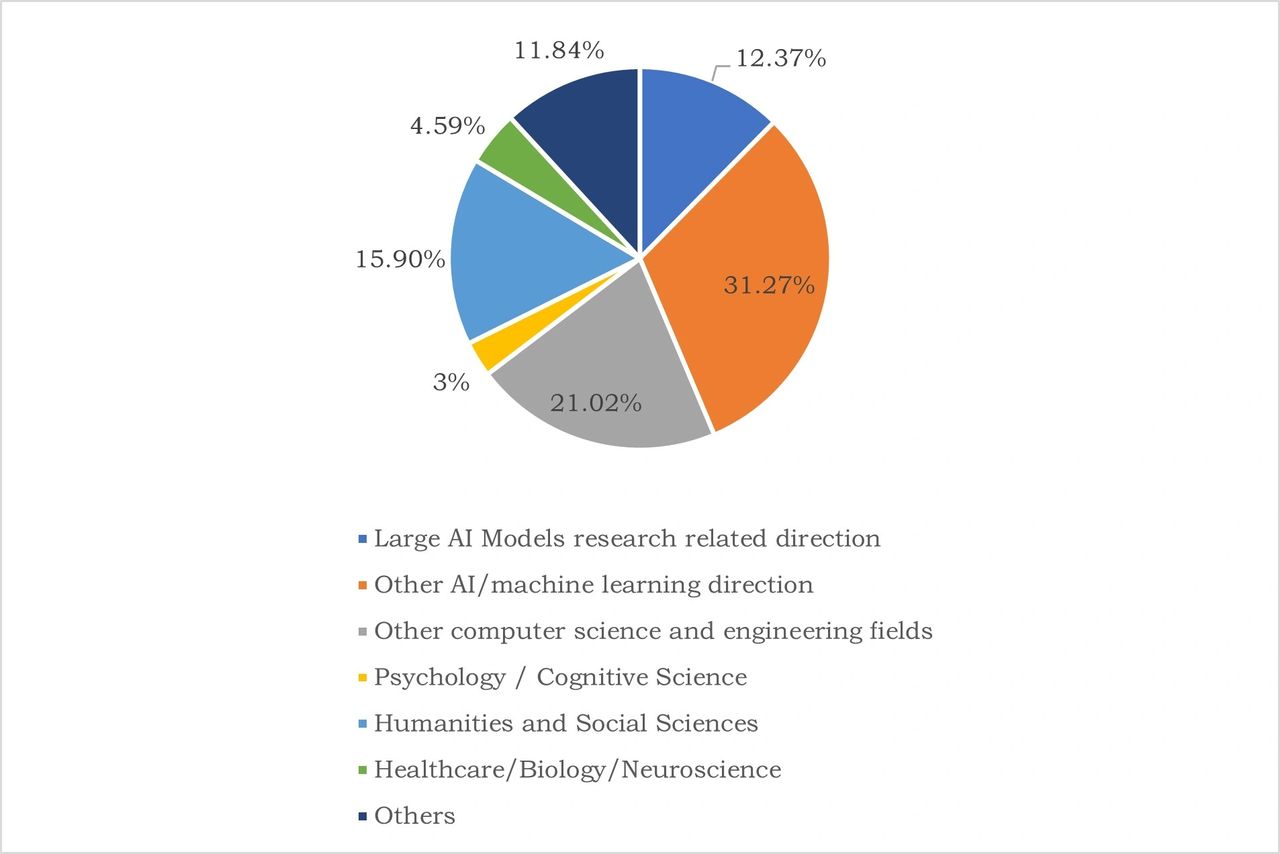

On March 29th, 2023, the Future of Life Institute initiated “Pause Giant AI Experiments: An Open Letter”. Although the open letter received support from numerous international scholars, industry leaders, and some political representatives, the international community also expressed complementary opinions on it through various channels. While some Chinese professionals signed the open letter, there has yet to be a focused study and analysis of the attitudes of Chinese academia, industry, and the public towards it. This research analysis aims to reflect the perceptions, attitudes, and voices of people from China regarding this open letter.

This survey and analysis was carried out by the Center for Long-term Artificial Intelligence (CLAI) and the International Research Center for AI Ethics and Governance (CAIEG). It was conducted through an online questionnaire, and as of April 4th, 2023, a total of 566 people from all over China had participated. The survey was anonymous, and the participants were distributed throughout most provinces in China. Figure 1 displays the distribution of the participants’ fields.

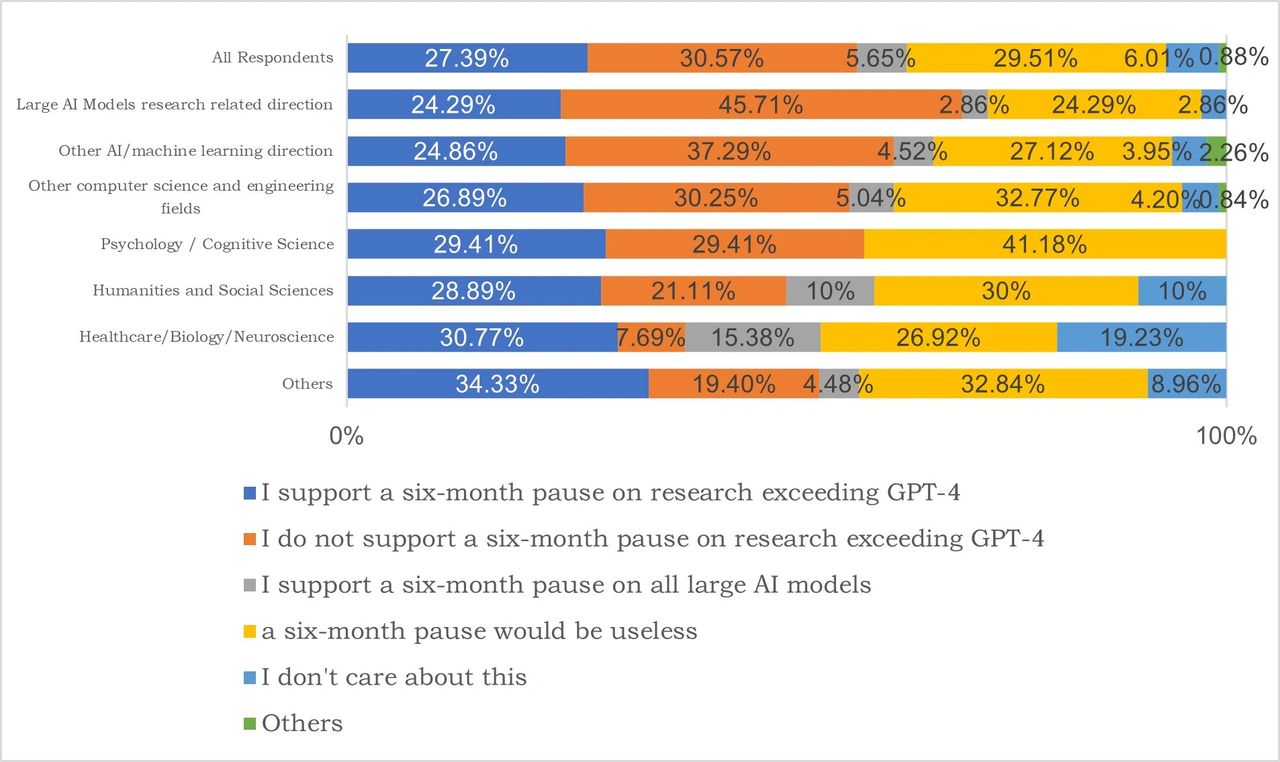

Figure 1. Field distribution of survey participants from China.

92.05% of the survey respondents knew about “giant AI models” before participating in the survey, and 85.16% of them frequently used or had occasionally tried products or services related to giant AI models. For AI-related participants this figure is 95.03%, and for participants in the humanities and social sciences and psychology/cognitive science fields it is over 77%.

In response to the open letter’s call to “Pause Giant AI Experiments”, 27.39% of all respondents supported pausing, for at least six months, the training of AI systems more powerful than GPT-4; 30.57% did not support a pause; 5.65% supported a six-month pause on all large AI model research; and 29.51% did not believe a pause would be effective. When those who supported a six-month pause on all large AI model research are counted together with those who supported a pause for systems more powerful than GPT-4, supporters and non-supporters account for 33.04% and 30.57% respectively.

There were some differences in attitudes among practitioners in different fields, as shown in Figure 2. Practitioners of giant AI model research and other AI/machine learning researchers are the potential stakeholders with the most at stake, yet the proportion of them supporting a pause exceeded 24% (over 27% when combined with supporters of a pause on all large AI models), which was not significantly lower than that of participants in other fields. At the same time, 45.71% of practitioners in fields related to large AI models did not support a pause, a higher proportion than among participants in other fields. Practitioners in psychology/cognitive science, healthcare/biology/neuroscience, and the humanities and social sciences were more supportive of a pause than those in AI and computer science.
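The combined share of pause supporters quoted above follows directly from the reported percentages. The short Python sketch below reproduces that arithmetic. The dictionary keys are labels made up for this illustration, and the approximate head counts are inferred here by applying each percentage to the 566 respondents; the report itself gives only percentages, so the counts are an assumption rather than reported data.

```python
# Sanity check of the headline shares reported for the pause question.
# Key names are illustrative labels, not the survey's own option wording.
TOTAL_RESPONDENTS = 566

shares = {
    "pause_beyond_gpt4": 27.39,        # support a 6+ month pause beyond GPT-4
    "no_pause": 30.57,                 # do not support a pause
    "pause_all_large_models": 5.65,    # support a 6-month pause on all large models
    "pause_ineffective": 29.51,        # do not believe a pause would be effective
}

# Combined support: both groups that favour some form of pause.
combined_support = round(
    shares["pause_beyond_gpt4"] + shares["pause_all_large_models"], 2
)

# Share of respondents not covered by the four reported answers.
not_broken_out = round(100 - sum(shares.values()), 2)

# Approximate head counts, inferred by rounding (not reported in the source).
approx_counts = {
    name: round(TOTAL_RESPONDENTS * pct / 100) for name, pct in shares.items()
}

print(f"combined support: {combined_support}%")
print(f"not broken out in the text: {not_broken_out}%")
print(approx_counts)
```

The four reported answers do not sum to 100%; the remainder corresponds to responses that the text does not break out.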

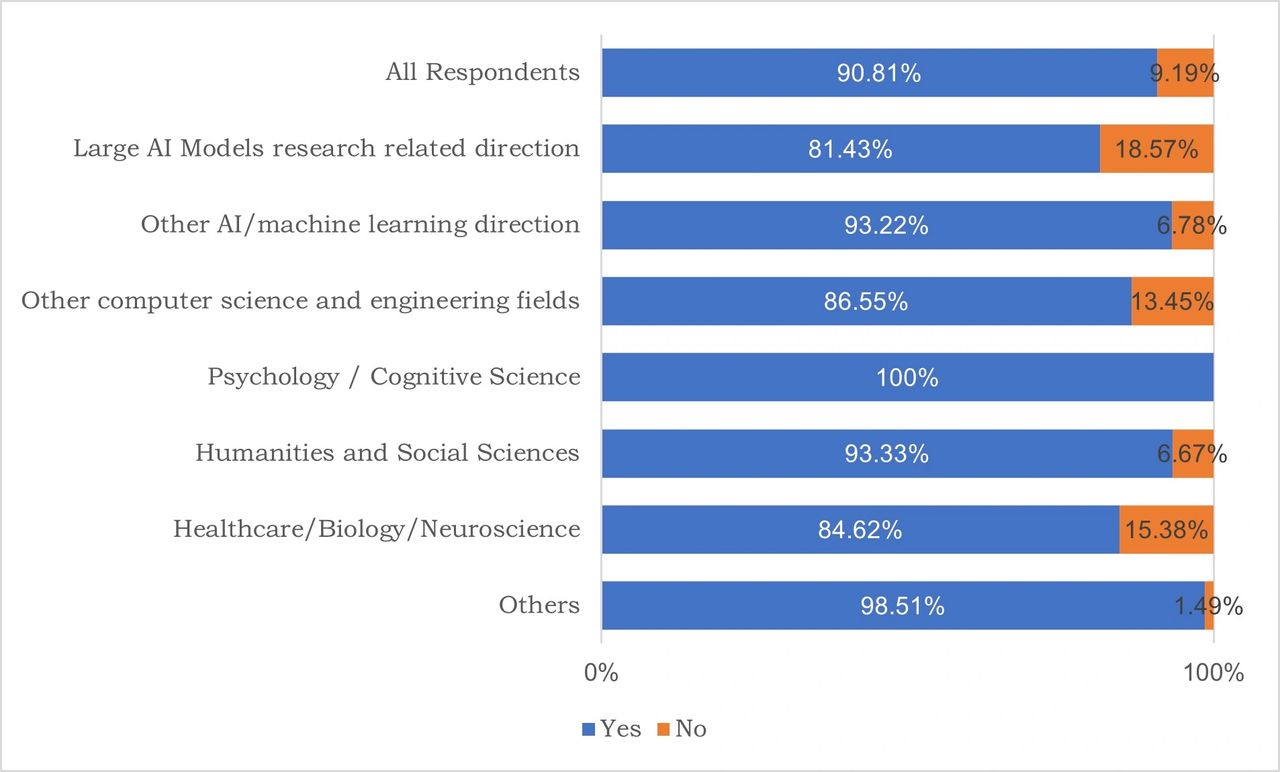

Figure 2. Chinese participants’ attitudes on whether to support pausing, for at least 6 months, the training of AI systems more powerful than GPT-4.

Regarding the question “Do you support the ethics, safety, and governance framework being mandatory for every large AI model used in social services?”, 90.81% of participants expressed support. Considering that the question is about “every large AI model”, the data reflects the participants’ strong concern about ethics, safety, and governance issues and their determination to take action to address them. The proportion of researchers related to large AI models who support it is 81.43%, while the proportion among other AI/machine learning professionals reaches 93.22%, which is very close to that of professionals in the humanities and social sciences. This indicates that the vast majority of AI researchers are aware of the ethics and safety risks and are willing to support the implementation of ethics, safety, and governance frameworks for every large AI model.

Although 45.71% of practitioners related to large AI models do not support the pause, 81.43% of them support the mandatory implementation of an ethics, safety, and governance framework for every large AI model used in social services, indicating that the vast majority of these practitioners believe that implementing such a framework is the way to solve the problem. Among scholars from psychology/cognitive science, agreement with the mandatory implementation of an ethics, safety, and governance framework for every large AI model used in social services reached 100%, as shown in Figure 3.

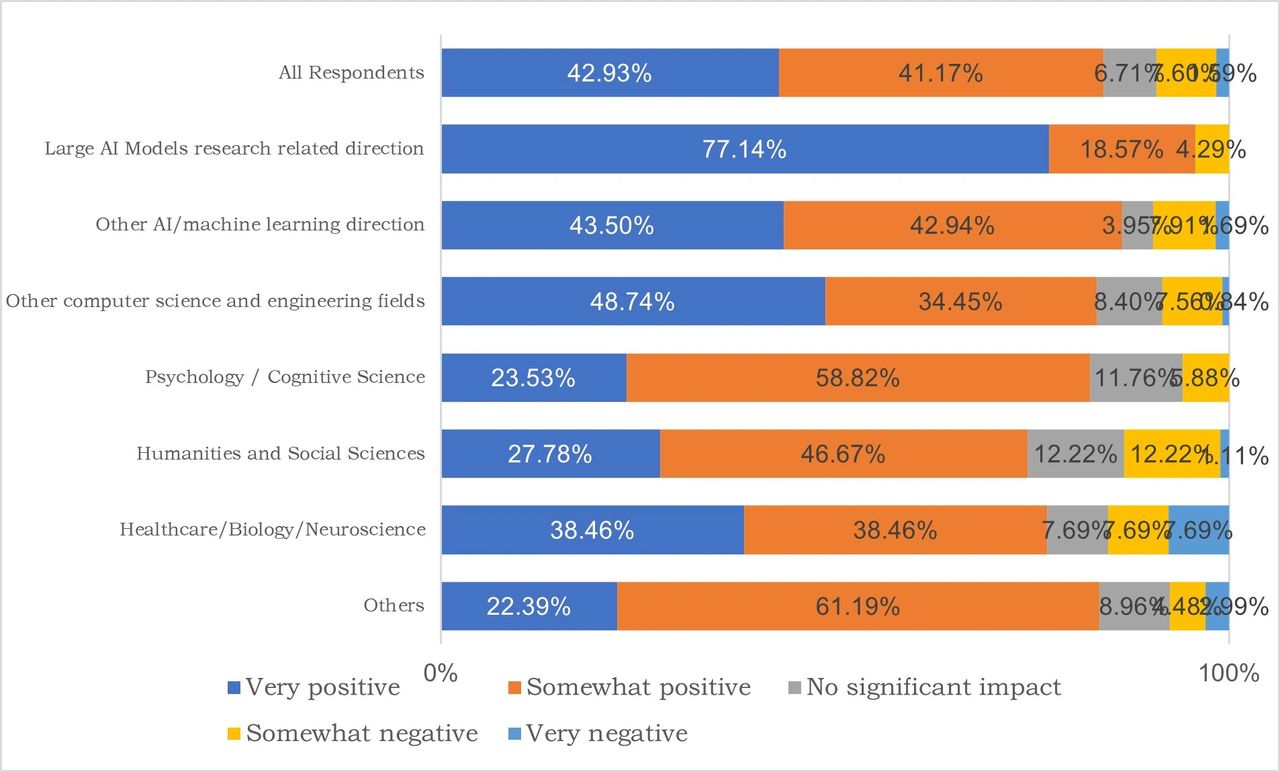

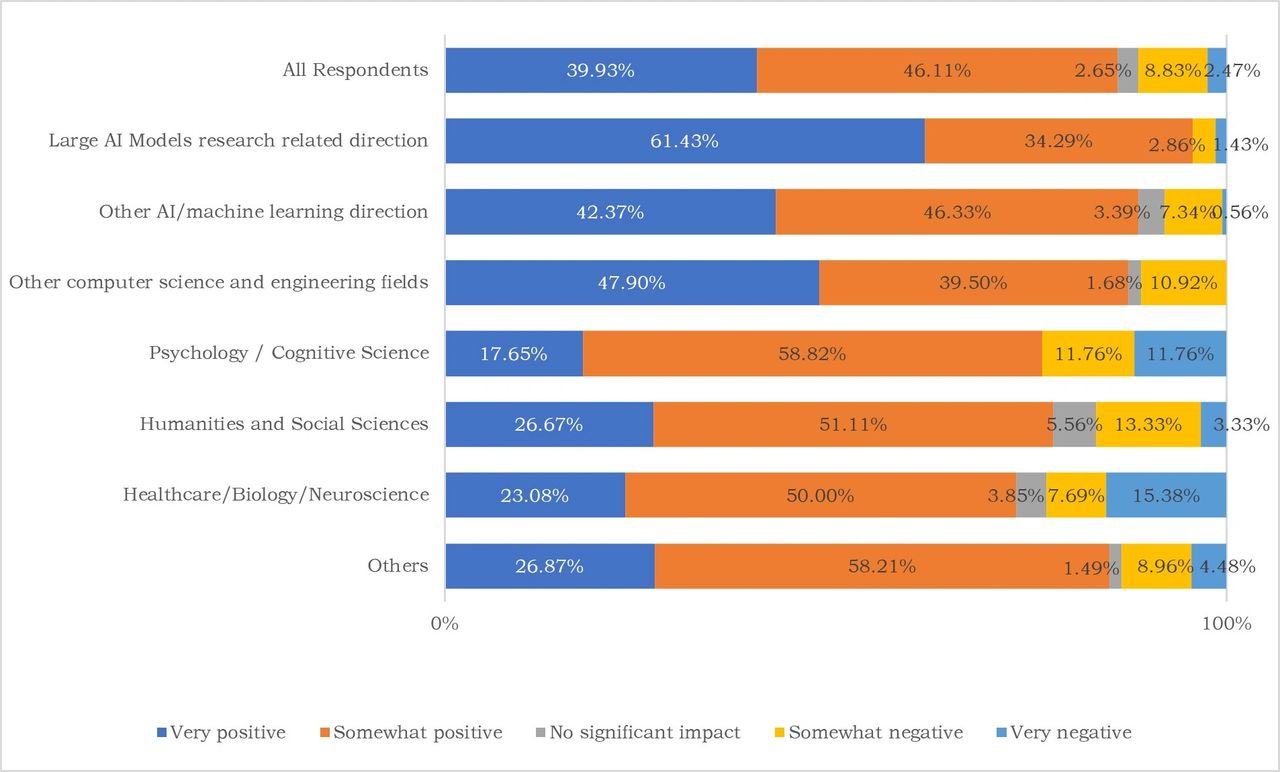

Figure 3. Chinese participants’ attitudes towards “whether to support the implementation of ethics, safety and governance framework for every large AI model used in social services”.

Regarding the impact of continued research and application of large AI models on themselves, 84.1% of participants believed it was very positive or somewhat positive. The proportion of those who felt it was somewhat negative or very negative was highest in the healthcare/biology/neuroscience field, reaching 15.38%. In terms of the impact of continued research and application of large AI models on society, 86.04% of participants believed it was very positive or somewhat positive. The proportion of those who felt it was somewhat negative or very negative was highest in the psychology/cognitive science field, reaching 23.52%. Professionals in psychology/cognitive science, healthcare/biology/neuroscience, and the humanities and social sciences expressed greater concern about the negative impact of continued research and application of large AI models on society than those in other fields, as shown in Figures 4 and 5.

Figure 4. Attitudes towards the impact of continuous research and application of large AI models on oneself.

Figure 5. Attitudes towards the impact of continuous research and application of large AI models on society.

Among respondents who chose “supporting a pause for at least 6 months the training of AI systems more powerful than GPT-4” or “supporting a 6-month pause on all large AI model research”, the main subjective opinions expressed are:

(1) Concerns about safety and ethics: the opaque and unexplainable nature of large models such as GPT-4, lack of control, misuse and abuse, threats to human existence, the replacement of humans, unemployment, lack of openness, and being unprepared to face challenges, as well as what to do if large models gain consciousness or life, and future enslavement of and harm to humanity, or even the destruction of the world. 0.15% of the participants believe that “large models as a tool of competition between nations are dangerous, and current development is not rational”.

(2) Concerns about the unknown: “We still cannot understand large models, what if they pose a threat to humanity? Large models are seen as dangerous, with unknown dangers that are not yet recognized by humans. Concerns about the unknown and not knowing how to address these concerns.”

(3) Concerns about current regulation and governance: “Development is too fast, there is currently no ability or effective method to regulate, ethical frameworks are incomplete, and there is a lack of governance systems and mechanisms.”

Among respondents who chose “not supporting a pause for at least 6 months the training of AI systems more powerful than GPT-4” or “not believing that a 6-month pause on large AI model research will have an effect”, the main opinions are:

(1) Large AI models are beneficial to development: “Large AI models are beneficial to humanity, and the benefits outweigh the drawbacks.”

(2) Both development and governance are necessary: “We should actively respond to the challenges posed by large AI models, but that does not mean we should stop developing.”

(3) Stopping is not realistic, and a true stop is impossible. Some also believe that “calling for a pause is just a show, and stopping for 6 months will not bring about any change”.

(4) Some are not optimistic about large AI models: “Large AI models are overstated and not as good as imagined. The current AI, and even future AI, cannot possess abilities beyond human imagination. These concerns are meaningless.” Some respondents believe that “national investment should not be tilted towards large AI models; they do not believe that large models lead to real intelligence, and their performance and potential are currently not good enough”.

Overall, the research results show that participants from various fields in China have different opinions on the proposal to “pause for at least 6 months the training of AI systems more powerful than GPT-4”. Approximately 30% of the participants support the proposal (including those who agree to pause all large AI model research for six months), 30% oppose it, and another 30% believe that it will not have a substantial effect.
In addition, “supporting the implementation of an ethics, safety and governance framework for every large AI model used in social services” has become a consensus among Chinese participants, showing that they recognize the crucial importance of ethics, safety, and governance frameworks for large AI models. Even among the participants who do not support the proposal to pause research on giant AI models beyond GPT-4 for six months, 82.66% expressed support for the idea that “every large AI model that empowers social services must implement an ethics, safety and governance framework”, indicating that they believe this is the key to solving the problem.

Chinese participants generally agree that the impact of large AI models on individuals and society will be relatively positive, but non-AI researchers have shown more concern, which should be instructive for AI researchers. Although the subjective part of the survey did not include a question asking for suggestions, many respondents offered specific suggestions for the development of ethical and governance frameworks for large AI models. For example, they suggest paying attention to ethics research, developing ethical frameworks, building governance systems, strengthening supervision, and enacting legal standards to prevent the misuse of giant AI models. Governance should keep pace with development, and development should not move too fast without effective supervision. Participants also suggested avoiding malicious competition, as it can lead to monopolies and a lack of transparency.

The research team expresses its gratitude to all the scholars, industry practitioners, and members of the public from different regions of China who participated in the survey and shared their attitudes, concerns, and suggestions for the development of giant AI models. Their input is essential for the steady development and deep governance of giant AI models, and it is also a crucial reference for achieving “AI for good” and for AI to truly and positively empower the development of society and ecology.

Authors:

- Yi Zeng (Center for Long-term AI; and International Research Center for AI Ethics and Governance, Institute of Automation, Chinese Academy of Sciences)

- Sun Kang (Center for Long-term AI)

- Lu Enmeng (International Research Center for AI Ethics and Governance, Institute of Automation, Chinese Academy of Sciences)

- Zhao Feifei (International Research Center for AI Ethics and Governance, Institute of Automation, Chinese Academy of Sciences)